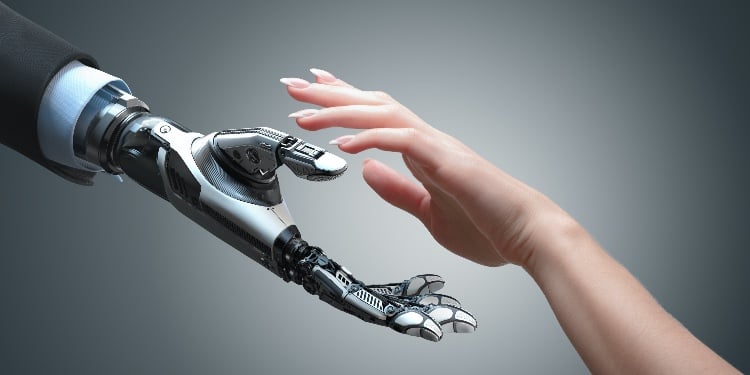

Users want to be in control of artificial intelligence (AI). It should be built in a way that empowers people and organizations, professor Vincent Wade reckons.

Wade is the director of ADAPT, the SFI Research Centre for Digital Media Technology, which focuses on developing next-generation digital technologies.

“Put people back in control,” the professor said during the Future Human conference in Ireland.

He and his colleagues are envisioning a digitally balanced society for 2030. This means that people should be empowered by technology, AI should be controlled by humans, and users should cease to be treated as a commodity.

Toxic data

Even before the lockdown, people had been increasingly using digital tools. The pandemic accelerated this trend.

“Today, we experience a fusion of the physical world and the digital world. AI and digital media are transforming our experience of social interaction, of identity, of governance, our working environments, and our lifestyles,” he said.

Digital media and AI have become more accurate in terms of decision making in very narrow domains. But the fast digital transformation has its downsides.

“We see a lack of control in AI. AI doesn’t actually understand things. Data-driven AI looks at the data and then makes decisions. But it can’t explain what it’s doing. It’s very difficult to control unless you are actually controlling the data itself,” the professor said.

Controlling data is not easy either. There are many concerns about privacy and about decisions being made for us without our having any control over them.

From the perspective of data-driven enterprises, it’s also difficult to have control over data because it comes from multiple sources.

“We are talking about data lakes, but a number of them have turned toxic. They can’t be used because of those issues in trust,” he said.

We should not be the product

User attention is in high demand in the market, so various techniques are deployed to keep people online.

“Personalization is being used to keep people attentive, to keep them online. In most recent articles around the Social Dilemma, we see that our attention is the product. And that’s not always a good thing,” Vincent Wade said.

According to him, a lot of businesses are building their strategies around the user being the product.

“We see that the services are free but this is only because the user data is being sold in the background. This is a model which has been around for nearly twenty years. But it’s beginning to evolve, and people begin to say maybe there’s a better way, and it’s open to disruption,” he said.

In the future, the services themselves should become the product.

Personal agents

“We are looking at how we can make AI that empowers individuals, and make them proactive, to integrate AI so that we can stay in control,” the professor said.

Enterprises would also benefit from this empowerment of individuals, rather than just trying to automate the individual.

“We think of a digitally enhanced human as somebody who is empowered to confidently and seamlessly use and interact with digital media. And digital media and AI can extend their profitability, efficiency, and user satisfaction,” Vincent Wade said.

The ADAPT centre is also looking at next-generation personal agents.

“These are agents that go out and do things for you. Gather the information, perform the task, represent us in the digital world, but do this under our control. Do it in a way where we are the ones making sure that the actions are as we would do in the real world,” he said.

From an algorithmic perspective, researchers are trying to figure out how to extract knowledge from streams of text, image, video, virtual reality (VR), augmented reality (AR), and speech.

“We think of speech as a new landing page where we want users to ask what they want in a much more natural way. Media is becoming much more blended and much more multi-modal. We can deliver the media in whatever form, whatever context that the user wants,” he said.

The bottom line is that users want to be in control of AI and be able to trust AI. People have privacy concerns, and they are worried about being the product themselves.

“We see this revolution towards human-centric AI. There's a lot of automation in using AI and digital media. But users want to be in control,” he said.

Importantly, researchers are also looking at how to do more with AI using less data.

“We should not have these massive lakes of data to make good decisions,” he explained.
