The development of generative artificial intelligence (AI) tools accelerated rapidly after the public release of ChatGPT. With generative AI, anyone can create fake content, including photos, videos, and text. While this is a technological advance, it raises serious concerns when malicious parties exploit it to spread fake news and propaganda.

Fake media generated with AI tools can cause severe damage. For instance, it can threaten the legitimacy of elections, move stock markets, and inflict serious reputational harm on the people and organizations it targets.

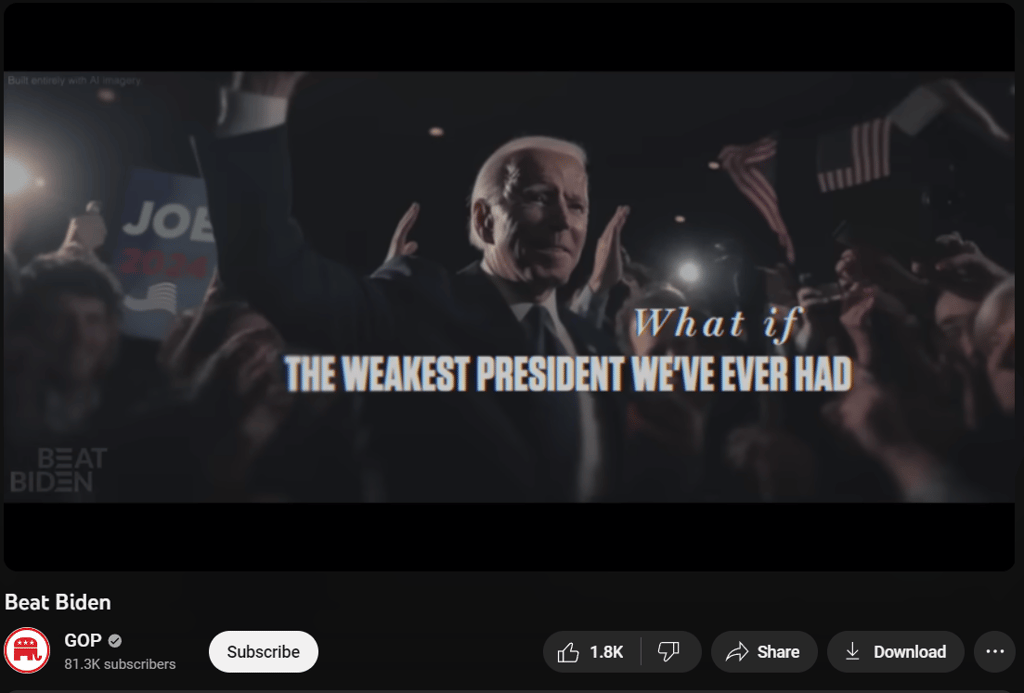

A clear example of how AI tools can affect elections is the advertisement the Republican National Committee (RNC) created with generative AI. The ad imagines a series of consequences if Joe Biden is re-elected in 2024, such as China invading Taiwan, alongside a string of domestic crises.

This was the RNC's first political ad created with AI tools, but all parties are expected to use such tools regularly in the upcoming US elections.

AI tools for generating content

There are numerous AI tools for generating synthetic media content. The most popular and well-established include:

- Midjourney: to create digital art and images using AI.

- Imagine: to generate digital art from text prompts.

- DALL·E 3: to generate digital art from prompts written in natural language. Available as part of the ChatGPT Plus subscription.

- DreamStudio: to generate images and add new effects to existing images.

- Adobe Firefly: to create high-quality output such as images and text effects, and add or remove objects from images.

Negative consequences of AI-generated deepfakes

Various threat actors can use deepfake AI technology in different scenarios for malicious purposes. Here are some possible consequences of using this technology:

- Spreading fake news on a large scale, for example by impersonating political figures to create fabricated statements. This can severely influence voters and change the entire course of an election.

- Sowing confusion and distrust of news media in the target society or country, because people no longer know what to believe.

- Running state-sponsored campaigns: foreign intelligence agencies could direct deepfake operations to spread chaos in target countries.

- Disrupting political leaders' agendas and distancing them from the public, because they become reluctant to speak publicly for fear that their speeches will be manipulated with AI tools.

- Mass-producing fake content: generative AI tools such as ChatGPT can produce large volumes of fake content in a short time, which can then be spread across the internet to mislead the public on a wide scale.

- The most dangerous impact of deepfake content is growing public distrust of established media channels, such as TV, radio, and online news. Generative AI tools can create convincing fake content (both visual and audio) that is often indistinguishable from content produced by humans. People may come to distrust the news because they cannot verify its authenticity.

Beyond politics: AI in various scenarios

While much focus has been on the political impacts of deepfakes, this AI-enabled deception also presents major risks across other areas of society. Some examples of how artificially generated fake content could potentially cause harm include:

- Deepfake technology can be used in corporate espionage by impersonating executives' voices or likenesses in counterfeit communications, for example, to send false orders instructing employees to initiate unauthorized wire transfers or to hand over sensitive business information.

- Deepfake technology can also be used to craft advanced social engineering attacks that trick individuals or employees into revealing sensitive information, such as credentials that grant access to protected computing resources.

- AI tools can be used to manipulate innocent people's photos, for example, to make them appear to be in police custody, in order to damage their reputations.

- On social media, AI bots can be used to create large numbers of fake accounts on major platforms and populate them with AI-generated photos and content so they look authentic. These accounts can then be used to spread false stories targeting individuals or companies.

- In the entertainment sector, deepfake technology has been widely used to target celebrities. For example, swapping a celebrity's face into adult content can seriously harm their reputation, and such videos go viral across the internet very quickly.

Social media manipulation and freedom of speech in the age of generative AI technology

While AI technology offers numerous opportunities across disciplines, we have seen that nation-state and non-state actors can heavily abuse it to manipulate social media content and undermine press freedom.

For instance, a recent report published by Freedom House, a well-known human rights group, found that governments in at least 16 countries use generative AI to mislead the public with false information or to intensify censorship of their opponents' online content.

This significantly expands the toolkit governments have for digital repression.

Generative AI technology and the underlying machine learning (ML) models will enable governments to strengthen their restrictive measures against journalism and free speech, for two reasons:

- AI tools are easy to access, which allows anyone, even entities in low-income countries, to use them for many purposes, including spreading misinformation and manipulating social media.

- AI and ML can be used not only to create fake content but also to strengthen technical censorship, preventing unapproved online content from reaching internet users in specific geographical locations.

For example, Venezuelan state media outlets used AI services from Synthesia, a London-based artificial intelligence company, to create fake videos. According to The Washington Post, the clips that aired on the Venezuelan state-owned television station VTV were taken from a YouTube channel called House of News (now offline), which posed as an English-language media outlet. However, closer investigation of its content revealed that it was promoting the Venezuelan government's messaging online.

Returning to the US, the anti-Biden ad discussed earlier was not the only one created with deepfake technology. For instance, Florida Governor Ron DeSantis's presidential campaign released another video that likewise misled the public.

The clip appears to use AI-generated images depicting former President Donald Trump hugging Dr. Anthony Fauci. If we extract individual frames from the video and run a reverse image search with services such as Google Images and TinEye, we get no results. Because authentic news photos of such a moment would normally appear in search results, this strongly suggests the images were generated with AI.
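Google Images and TinEye do not publish their exact matching methods, so the following is only a hypothetical sketch of one well-known building block for near-duplicate image search: perceptual "average hashing" (aHash). Each image is reduced to a 64-bit fingerprint and compared by counting differing bits. The function names (`average_hash`, `hamming`, `find_matches`), the 8x8 toy grids, and the 5-bit threshold are all assumptions chosen for illustration.

```python
# Hypothetical sketch: "average hash" (aHash) near-duplicate matching.
# Images are modeled as 8x8 grids of grayscale values (0-255); a real
# pipeline would first decode each image, convert it to grayscale, and
# downscale it to 8x8 before hashing.

def average_hash(pixels: list[list[int]]) -> int:
    """Return a 64-bit hash: each bit is 1 where a pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def find_matches(query: list[list[int]], index: dict[str, int], max_distance: int = 5) -> list[str]:
    """Return names of indexed images whose hash is within max_distance bits of the query."""
    q = average_hash(query)
    return [name for name, h in index.items() if hamming(q, h) <= max_distance]


# Toy "index" of previously published images (hypothetical data).
gradient = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
checkerboard = [[255 if (r + c) % 2 else 0 for c in range(8)] for r in range(8)]
index = {"gradient": average_hash(gradient), "checkerboard": average_hash(checkerboard)}

# A slightly brightened copy of a published image still matches it...
brightened = [[min(p + 3, 255) for p in row] for row in gradient]
print(find_matches(brightened, index))  # ['gradient']

# ...whereas an image that was never published matches nothing.
novel = [[255 if c < 4 else 0 for c in range(8)] for r in range(8)]
print(find_matches(novel, index))  # []
```

Small edits such as a brightness change leave the fingerprint almost untouched, which is why a re-shared or lightly edited photo can usually be found. A freshly generated image has nothing in the index to match, so an empty result is evidence of novelty rather than proof of AI generation on its own.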

Aside from spreading deepfake content to mislead the public, more governments are using AI and ML technologies to interfere in social media discussions and promote their political views. According to the Freedom House report, at least 47 governments deployed commentators to spread propaganda in 2023.

AI and ML tools are also increasingly used for content filtering. ML offers an efficient way to filter online content, especially on social networking sites and discussion forums.

AI technology has radically changed how content filtering works by introducing automated systems that can analyze and categorize massive volumes of digital data in real time. AI filtering systems use complex algorithms and machine learning models to identify and filter out content that violates pre-established rules. Their ability to learn continually makes AI tools more powerful in this domain.

Traditional filtering methods, such as fixed keyword lists, cannot learn from trends and patterns, whereas AI and ML tools can adapt to their working environment and learn from users' behavior to continually improve their capabilities.
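To make the contrast between static and learning filters concrete, here is a minimal sketch assuming a text-only filter: a fixed keyword list next to a tiny naive Bayes classifier that updates its counts as new labeled examples arrive. Naive Bayes is simply one easy-to-read learning method picked for illustration; it is not the method of any particular platform or government, and every name (`BLOCKLIST`, `keyword_filter`, `OnlineNaiveBayes`) and training sentence is hypothetical.

```python
import math
from collections import Counter

# Hypothetical static rule list: a traditional filter only knows these terms.
BLOCKLIST = {"banned_term"}


def keyword_filter(text: str) -> bool:
    """Traditional filter: block only if a word is on the fixed list."""
    return any(word in BLOCKLIST for word in text.lower().split())


class OnlineNaiveBayes:
    """Tiny multinomial naive Bayes classifier that can be updated one example at a time."""

    LABELS = ("allow", "block")

    def __init__(self) -> None:
        self.word_counts = {label: Counter() for label in self.LABELS}
        self.doc_counts = Counter()
        self.vocab: set[str] = set()

    def learn(self, text: str, label: str) -> None:
        """Incrementally update word and document counts with one labeled example."""
        words = text.lower().split()
        self.word_counts[label].update(words)
        self.doc_counts[label] += 1
        self.vocab.update(words)

    def _log_score(self, words: list[str], label: str) -> float:
        # Log prior with add-one smoothing over the two labels.
        total_docs = sum(self.doc_counts.values())
        score = math.log((self.doc_counts[label] + 1) / (total_docs + len(self.LABELS)))
        # Log likelihood of each word with Laplace (add-one) smoothing; unseen
        # query words are counted in the vocabulary so the denominator is never 0.
        total_words = sum(self.word_counts[label].values())
        vocab_size = len(self.vocab | set(words))
        for word in words:
            score += math.log((self.word_counts[label][word] + 1) / (total_words + vocab_size))
        return score

    def predict(self, text: str) -> str:
        """Return the label with the highest log score."""
        words = text.lower().split()
        return max(self.LABELS, key=lambda label: self._log_score(words, label))


model = OnlineNaiveBayes()
# Hypothetical labeled feedback, e.g. from human moderators.
model.learn("xyz rally tonight", "block")
model.learn("join xyz rally", "block")
model.learn("weather is nice today", "allow")
model.learn("sports results tonight", "allow")

post = "xyz rally downtown"
print(keyword_filter(post))  # False: the term was never added to the fixed list
print(model.predict(post))   # block: the model generalized from the examples
```

Because `learn` only updates counts, new feedback can be folded in continuously without retraining from scratch, which is the adaptive behavior the paragraph describes. Real filtering systems rely on much larger models and datasets.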

In China, chatbots and other generative AI tools are pre-programmed to avoid questions about the Tiananmen Square incident; for example, Baidu's new AI-powered text-to-image tool, ERNIE-ViLG, refuses such prompts. Other countries, such as Iran, are using ML to strictly filter online content, especially since the most recent uprising.

Interestingly, governments use ML technology for online censorship in two ways:

- Interfering with the data used to train ML models, which lets them control the models' output on particular topics.

- Restricting access to AI chatbots entirely when they cannot control their output.

Whatever the method, governments seem likely to use AI and ML at scale in the future to control online content and spread propaganda that serves their interests.
