News

Missing nuclear lab worker found dead as UFO theories swirl

A nuclear lab worker who had been missing for over a year has been found dead with a shotgun beside her, with authorities currently unable to specify the cause of her death.

Amazon moves Prime Day to June and extends shopping event to four days

Amazon.com will host its annual Prime Day sales event from June 23 to June 26, after holding it in July for the past five years, citing major holidays and sporting events as factors in its decision.

Meta-Manus deal triggers China crackdown on US tech acquisitions and gives power to reverse foreign deals

China issued sweeping new rules on Monday tightening control of overseas deals that involve Chinese investors, technology, data and national security, a month after Beijing ordered Meta to unwind its acquisition of AI startup Manus.

Hackers breach Obama White House Instagram years after it went silent

An Instagram account assigned to former President Barack Obama, inactive for almost a decade, has been hacked. Several AI-generated images, believed to be of Iranian origin, were posted.

Your next BMW could be built by a humanoid robot

BMW will start using humanoid robots on production lines at its Leipzig factory in Germany this summer.

UFO hype explodes after White House tease

This week, The Cosmic report rounds up a cryptic White House UFO post, as well as disclosure statements from a former director of aviation security and a NASA administrator.

Wikipedia editors threaten strike after Wikimedia layoffs

Layoffs in Wikimedia’s six-person Community Tech team sparked backlash from hundreds of Wikipedia editors who are now discussing strike action.

Estonian police could soon gain powers to demand your photos and videos

Estonia is considering a new law that would allow police to gain access to people’s photo and video files. The proposal would also legalize the use of drones for surveillance.

Think the internet is toxic? These EU countries have it the worst

In 2025, 42.3% of EU-based internet users encountered hostile content online – here are the most affected countries.

Apple Music down across multiple countries in third outage since April

Apple Music was down Friday across several countries — the popular music streaming service’s third disruption in two months.

Blue Origin New Glenn rocket explodes during Florida launchpad test

Jeff Bezos’ Blue Origin suffered a major setback after its towering New Glenn rocket exploded during a hot-fire test in Florida on Thursday – the dramatic blast coming as Blue Origin seeks to narrow the gap with Elon Musk's IPO-bound SpaceX.

AI mania sends Silicon Valley home prices soaring: AI stock is now starting to replace cash offers

New data shows Silicon Valley’s AI boom is minting a new class of startup millionaires and sending home prices through the roof – with some sellers even asking buyers for shares in companies like Anthropic instead of millions in cash.

Richard Dolan thinks UFO culture has a personality problem

With much of the UFO discussion these days about which politician or whistleblower said what, UFO historian Richard Dolan has observed that more of the focus should be on paranormal events themselves, and less on hyperbole.

Google’s AI deletes manga artist’s entire digital life overnight

Popular Japanese manga artist Masahiro Itosugi says his Google account was automatically suspended, and that old transcripts were wiped in the process.

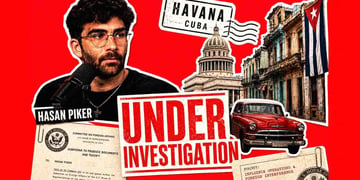

“They’re still after your boy:” Hasan Piker subpoena sparks Reddit firestorm

Left-wing Twitch influencer Hasan Piker has responded to federal authorities issuing a subpoena regarding a March trip to Cuba, in which he helped deliver roughly 20 tonnes of humanitarian aid.

Spanish court disagrees with La Liga over possible fines to NordVPN

It’s not over, but it’s something. That’s how the bosses at NordVPN are interpreting the news from Spain, where the Commercial Court of Cordoba has refused to punish the VPN company for alleged non-compliance with an order to block pirate football streams.

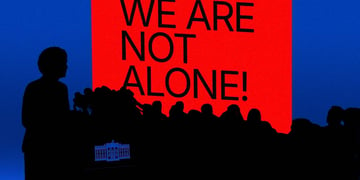

Ross Coulthart: Trump poised to reveal "we are not alone" on non-human intelligence

Following President Trump's second release of UFO evidence last Friday, leading journalist Ross Coulthart is anticipating a huge announcement very soon from the White House, potentially stating that “we are not alone.”

Wingtech files lawsuit in Chinese court, seeks $1.1 billion in damages and full control of Nexperia

Wingtech Technology has filed a lawsuit against Nexperia in China, demanding to regain full control of the company. In addition, Wingtech is seeking approximately $1.1 billion in damages.

Federal regulators sue after Minnesota bans prediction markets

Minnesota became the first US state to ban prediction markets – and quickly faced a federal lawsuit.

UK MPs slam digital ID rollout as a “fiasco” following rushed launch

The UK government’s digital ID rollout has been slammed as rushed and damaging to public trust in a parliamentary report released this week.
