Tech

Meta has quietly shipped the technology needed for facial recognition glasses

The technology needed to recognize people through Meta’s smart glasses is already sitting on millions of phones, according to new research by an independent researcher known as Buchodi.

EU digital sovereignty plan faces reality check: can Europe stand on its own?

The continent still relies heavily on US cloud providers, AI models, and semiconductor tech. What are the chances of it succeeding on its own?

Beyond Qwant: what are European alternatives to Google Search?

There’s no shortage of European alternatives to Google Search, but some rely on Google and Bing indexes, raising concerns about whether they are truly sovereign.

Experts say EU remains years away from technological independence despite sovereignty plans

As the European Union unveiled its technology sovereignty package on Wednesday, a top official posted in glee: "Today is Tech Liberation Day". True technological independence from US Big Tech, however, will take longer to attain.

No age-check technology can make children completely safe online, think tank warns

As the UK edges closer to age-gating the internet, a think tank has warned that no technology can provide a “magic fix” for online harms – and politicians need to be more honest about the limitations of age-assurance tooling.

The Dutch defense ministry wants to stop using Palantir

The Netherlands Ministry of Defense is considering replacing controversial US data broker Palantir’s software in the next two years.

Messaging apps will kiss you on one cheek and slap you on the other with E2EE

Messenger, Line, and WeChat will happily lure users with the promise of end-to-end encrypted messaging, while quietly creating highly detailed profiles of their lives.

European Parliament switches default search engine from Google to Qwant

The European Parliament will switch to French search engine Qwant from Google, it said on Wednesday, underscoring Europe's push to reduce its reliance on US technology in favour of local alternatives.

No more calls from fake mom: Google releases Android fake call detection feature

Google is set to launch a feature for Android phones that can tell you if a phone call from your mom is actually from her.

German state Bavaria cancels Microsoft contract to go open source

The administration of the largest German state, Bavaria, is moving away from Microsoft software to pursue a “sovereign basic workspace.”

Google seeks EPA approval to release over 64 million mosquitoes in the US

Google has applied for an experimental use permit (EUP) with the US Environmental Protection Agency (EPA), allowing the tech company to release tens of millions of laboratory-bred mosquitoes in California and Florida.

Can GoPro survive? The company says it has “substantial doubt” about its future

GoPro faces serious liquidity issues due to factors beyond the company’s control, and the camera manufacturer is even considering a potential sale or merger of the business.

German police may be using advertising data unlawfully obtained from brokers

Criminal investigation offices in at least two German states are using data obtained from brokers to gather information about suspects.

Is Apple creating its own Splitwise?

Apple is reportedly working on a Splitwise-like feature that would only require taking a picture of your bill.

Dutch watchdog files complaint over Flo collecting and selling intimate data

The Dutch privacy rights group Bits of Freedom says the popular period-tracking app Flo is commercializing users’ intimate data.

Microsoft backs down after zero-day dispute, says it won’t sue security researchers

Microsoft seeks to reduce tensions with the cybersecurity community and says it has no intention of suing security researchers. The move comes after the company received pushback over its response to public disclosure of a few Windows zero-day vulnerabilities.

How OpenAI and Anthropic compete to headhunt top AI talent that already has it all

The AI world has seen its equivalent of soccer's big-name transfers in recent weeks, but how do you convince staff who already have it all to switch employers?

Doomsday plan? Tech billionaire and Palantir cofounder Peter Thiel relocates to Argentina

Venture capitalist Peter Thiel is reportedly putting down roots in Argentina. This reflects a broader trend of prominent American tech billionaires exploring safe havens in case things go south in the US.

13 European cloud providers back EU push to reduce reliance on US tech

Thirteen European cloud providers joined forces with a group of EU lawmakers and NGOs on Monday to back the European Commission's drive to cut the region's dependence on US technologies and support local businesses.

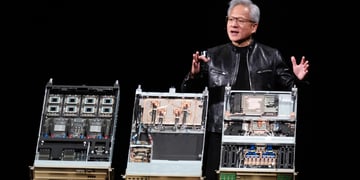

Nvidia launches PC chip to bring AI directly to personal computers

Nvidia on Monday unveiled a new chip that puts AI capabilities directly into laptops and desktop computers. The chip is due to be delivered this fall, and experts said it would overhaul how users engage with AI.
