Editorial

The “ancestral voice” of Mama Graves is AI, but does that ruin the message?

Would you still resonate with a brilliant spoken word poet if you found out they were AI-generated?

GPTZero says AI “vibe citations” are spreading through big firms

As AI continues to develop at lightning speed, researchers at GPTZero are growing increasingly concerned that the trend of artificial misrepresentation will spread across businesses as corporations continue to scale up their operations.

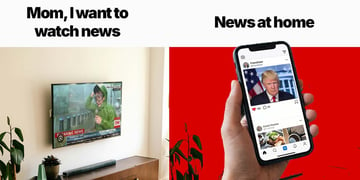

If I relied on Instagram for news

Dinner-table conversations at our house are weird. While my partner and I are both journalists, the stuff he learns throughout the day is completely different from what I’m exposed to, a good example of how profoundly personalized our news streams have become.

When AI feels like an insult

To me, while AI can definitely help us get things done faster, human effort and creativity are more meaningful than results alone.

Build a powerful Hermes Agent on your gaming PC for free: here’s how I did it

Everyone is now running AI agents, spending hard-earned cash on Claude, GPT, or Gemini tokens. But what if I’m a cheapskate and care about my privacy? I transformed my gaming computer into a powerful AI workstation that runs a Hermes Agent when I’m not gaming, and it blew past my expectations.

Pineapples in the sky – UFO chunks from Burlison, Tyson, and Ocasio-Cortez

This week, The Cosmic Report rounds up what some of the leading figures in the UFO disclosure conversation have been saying, as suspense mounts ahead of the next batch of evidence to be released.

Is death a failure?

“Bring out the alien” – the UFO community has lost patience with trolls

Renowned astrophysicist Neil deGrasse Tyson was promoting his new book Take Me To Your Leader this week, which states that the author “wants to meet the aliens as badly as you do.” What did Tyson mean by this? The Cosmic Report investigates, and also looks at another eccentric individual.

Documenting everything everywhere all at once

Endless personal digital archives of every rendezvous, every flower, the first snowflake, screenshots of funny or infuriating conversations, memes, recipes, and notes can suddenly start to feel like we’re creating a case against ourselves that would help someone win against us in court.

Our (futile) quest to revive meaning in an AI-dominated world

AI is shoving an uncomfortable truth down our throats: we might not be as special as we think we are.

From breadcrumbs to nothingburgers: another confusing week of UFO disclosure

Donald Trump has reaffirmed his promise to release the UFO files, as he welcomed the Artemis II astronauts to the Oval Office. Meanwhile, more information has emerged regarding the “missing scientists” conspiracy theory.

Is it me, or is everything on the internet a bloody ad?

Blocking annoying third-party ads and pop-ups is easy enough. But honestly, brands still seep into our everyday conversations about everything, from discussing the sub-2-hour marathon through the lens of Adidas supershoes to dermatologists recommending nickel-free cosmetics for acne issues. When ads become hyperpersonalized and hard to recognize, trust ebbs, and everything starts to feel like a trap.

Palantir’s manifesto: Will Britain confront its tech dependence on the US?

A controversial manifesto by American spy-tech company Palantir has renewed calls in the UK to follow the EU and pursue digital sovereignty.

I asked OpenClaw to analyze stocks, but it failed and killed itself

I was recently experimenting with a new small AI model, Qwen3.6-35B, which, IMHO, is a much more exciting development than Anthropic’s Mythos.

Musk might be the villain, but what he’s doing with Neuralink will probably make you cry

While the world burns, Elon Musk is changing lives. Whether you love or hate him, the billionaire’s dream of telepathy is giving back to those who need it most.

Do you want me to wipe my makeup and talk to you about cybersecurity from my bedroom?

There’s a new “cool” thing to do if you want to be heard. It’s also troubling on so many levels.

World in crisis: why is JD Vance talking about aliens and can you trust your code?

It’s been a whirlwind week for the world, and here’s a recap of some of the stories you may have missed.

Gen Z didn’t grow up with slow: how AI, TikTok, and Discord reshape attention

TikTok, Discord, ChatGPT, 1.5x speed. Gen Z grew up at a fast pace, and now they’re crushing slow brands, dodging ads like antibodies, and rewiring tech at warp speed. Keep up or get left in the dust.

You won’t find a diamond in your suggested Instagram reel

You can clean your browsing history as much as you want to try to get a more positive social feed. But enraging content will always find a way. And when it does, big tech platforms start earning big bucks.

The US job market is ignoring AI: lawyers, engineers, and other pros are in high demand

Brutal tech layoffs were announced in January, spearheaded by Amazon’s 16,000-job cut. The headlines write themselves: AI is displacing white-collar workers. Yet, many industries in the US that are supposed to shrink under AI pressure just keep adding jobs, despite other economic and geopolitical uncertainties.
